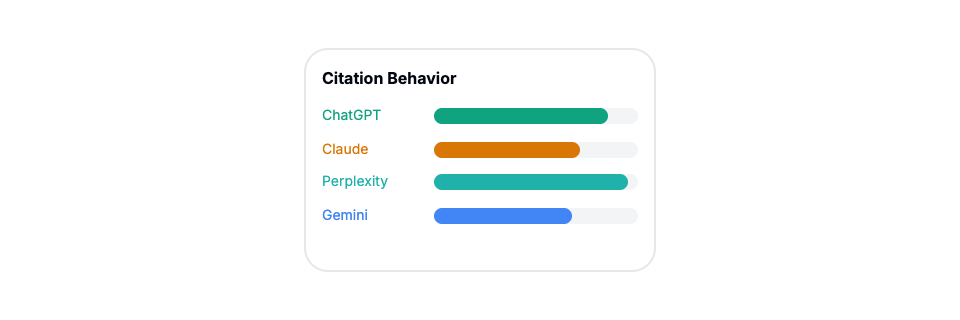

An analysis of 118K+ answers reveals dramatic differences in AI citation behavior: Perplexity averages 21.87 citations per question while ChatGPT averages 7.92, and OpenAI's is the only model that cites Wikipedia at a significant rate (4.8%).

Other Articles

The 2026 GEO Business Case: How to Measure ROI and Secure Budget for AI Search

A data-driven framework for marketing leaders to measure GEO performance, prove ROI to executives, and secure budget for AI search optimization in 2026.

The Complete Guide to GEO Metrics

The definitive guide to measuring AI visibility. Core metrics, industry benchmarks from 500+ brands, advanced insights, and actionable optimization strategies for ChatGPT, Claude, Perplexity, and other AI platforms.